Problem

Blind and visually impaired children under age 10 often don't know how to navigate their emotions.

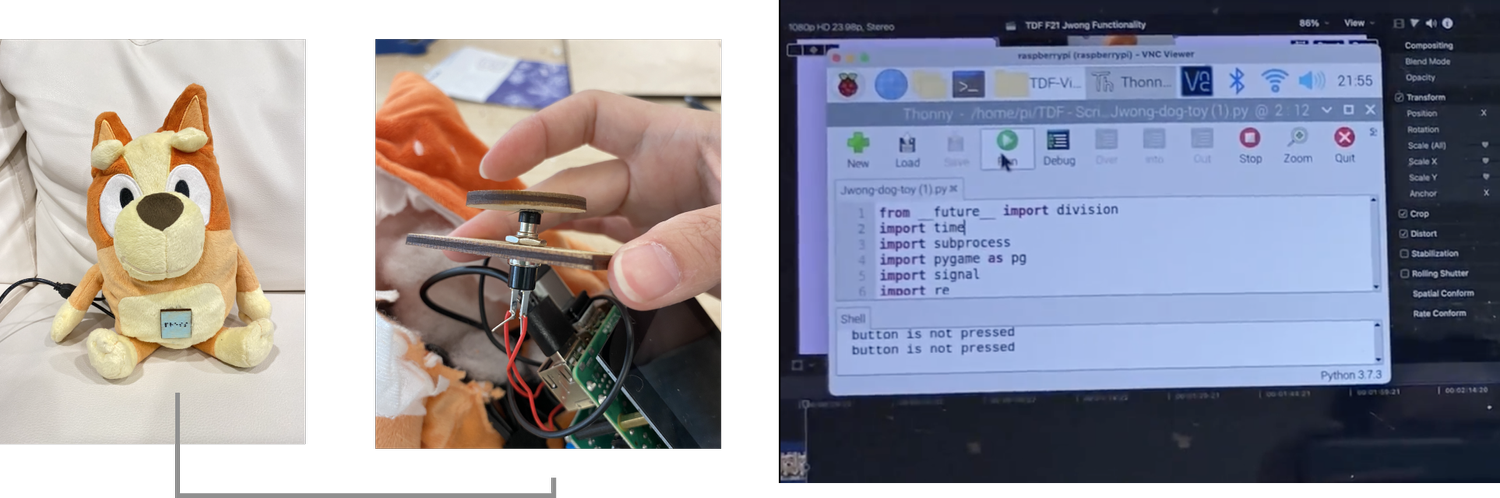

When children are distressed, they often turn to stuffed animals for comfort. To meet their accessibility needs, I focused on making the friendly toy easy for a child to detect and interact with, so that it can respond to the child's emotions.

Output

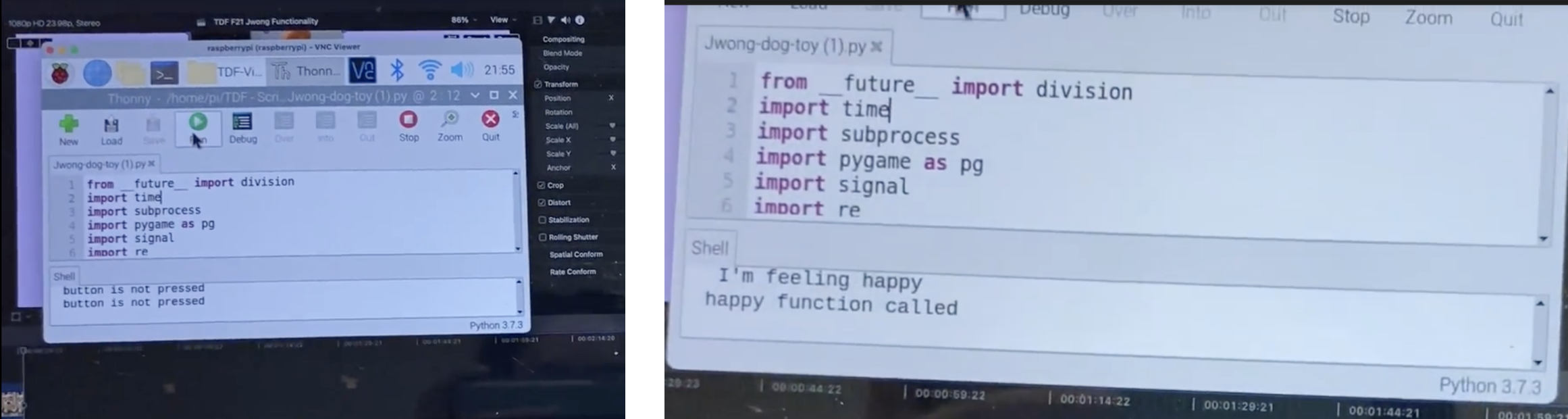

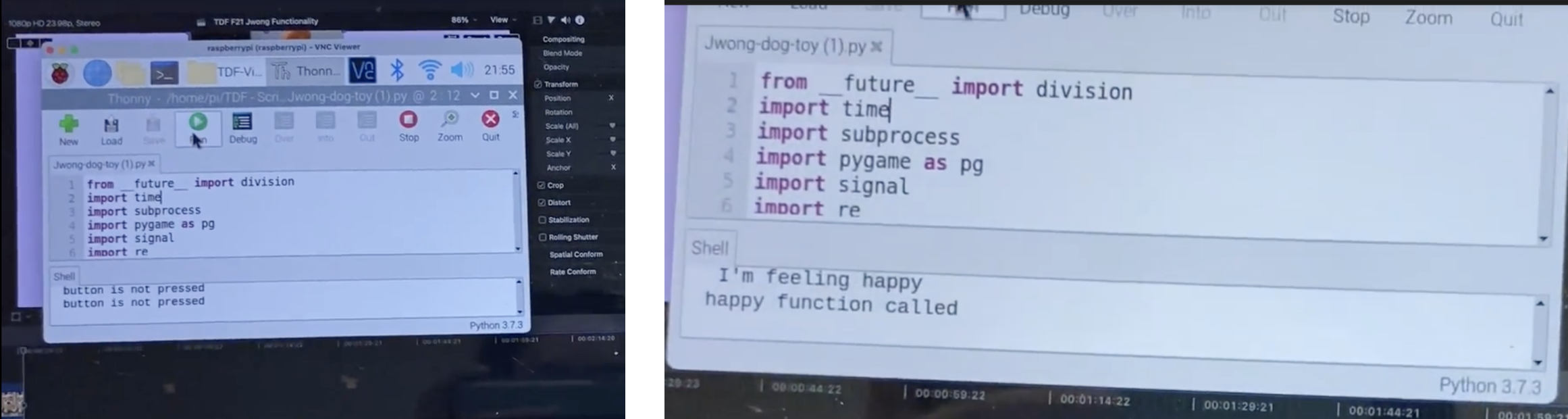

Designing conditional statements to provide tailored mental health strategies

Example mental health strategy: Input = button press and speech (“happy”); Output = bark sound (happy) and mental health strategy (happy): “I’m so happy that you’re feeling happy today. You should be proud of yourself. Give yourself a pat on the back.”
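The example above can be read as one branch of a conditional statement. Below is a minimal Python sketch of that branch, assuming the child's speech has already been turned into text by some speech-to-text step. The names `respond`, `ToyResponse`, and `bark_happy.wav` are hypothetical placeholders, not taken from the original project code.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToyResponse:
    bark_sound: str  # hypothetical audio file the toy plays
    strategy: str    # mental health strategy the toy speaks aloud


def respond(button_pressed: bool, transcript: str) -> Optional[ToyResponse]:
    """Pick the toy's reaction from the button state and the spoken emotion.

    Hypothetical sketch: only the "happy" branch comes from the example;
    other emotions would be added as further elif branches.
    """
    if not button_pressed:
        # The toy only reacts after the child presses the button.
        return None

    # Lowercase and split into words so "I'm happy!" still matches "happy".
    words = re.findall(r"[a-z']+", transcript.lower())

    if "happy" in words:
        return ToyResponse(
            bark_sound="bark_happy.wav",
            strategy=(
                "I'm so happy that you're feeling happy today. "
                "You should be proud of yourself. "
                "Give yourself a pat on the back."
            ),
        )
    # elif branches for other emotions would go here.
    return None


response = respond(True, "I'm happy!")
if response is not None:
    print(response.bark_sound)  # bark_happy.wav
```

Each additional emotion would get its own branch pairing a bark sound with a tailored strategy, and an unrecognized word simply produces no response.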

Solution

An interactive mental health toy that's engaging and helpful to blind children

We were given starter code, which I modified, revised, and extended with my own code to produce the following demo.

Conclusion

Exploring and programming AI for the first time

This was uncharted territory for me, but I learned to tackle technical challenges in ways I never had before. I learned the mechanics of how AI works through natural language processing (NLP), and I built my own interactive product outside the purely digital realm I had grown used to over the past few years.